Good Judgment Is the New Premium Skill for Engineers

When AI makes implementation cheaper, the engineers who stand out are the ones who can frame the problem, verify the output, and choose the right compromise.

The bottleneck has moved upstream

A lot of engineers still think the premium skill is speed.

I think that frame is already outdated.

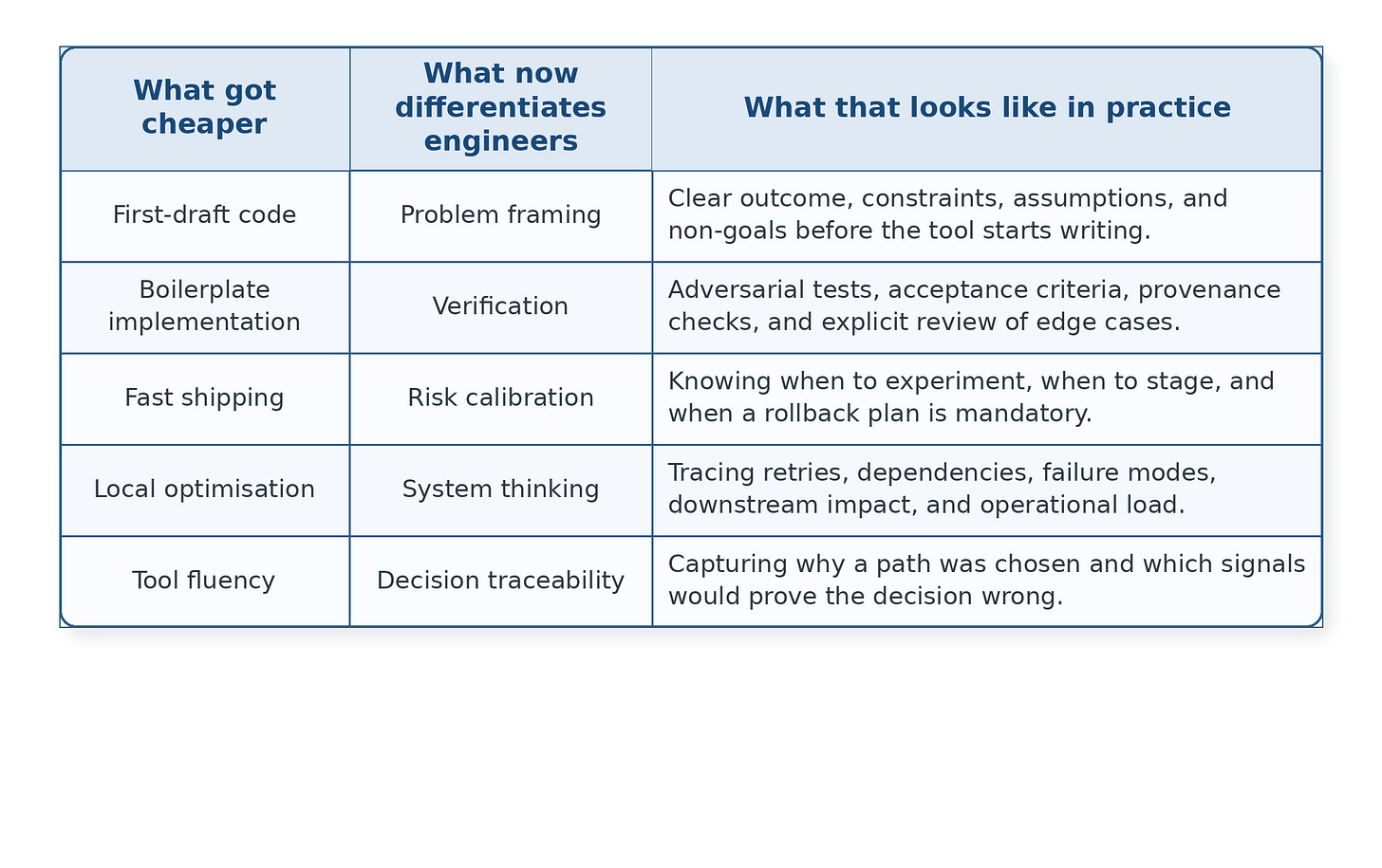

The wrong debate is whether AI will replace engineers. The useful question is which parts of engineering are becoming cheap enough that they stop being a differentiator. Writing the first draft of code is getting cheaper. Spinning up infrastructure is getting cheaper. Generating boilerplate is already cheap enough that nobody serious should confuse it with leverage.

What stays expensive is judgment.

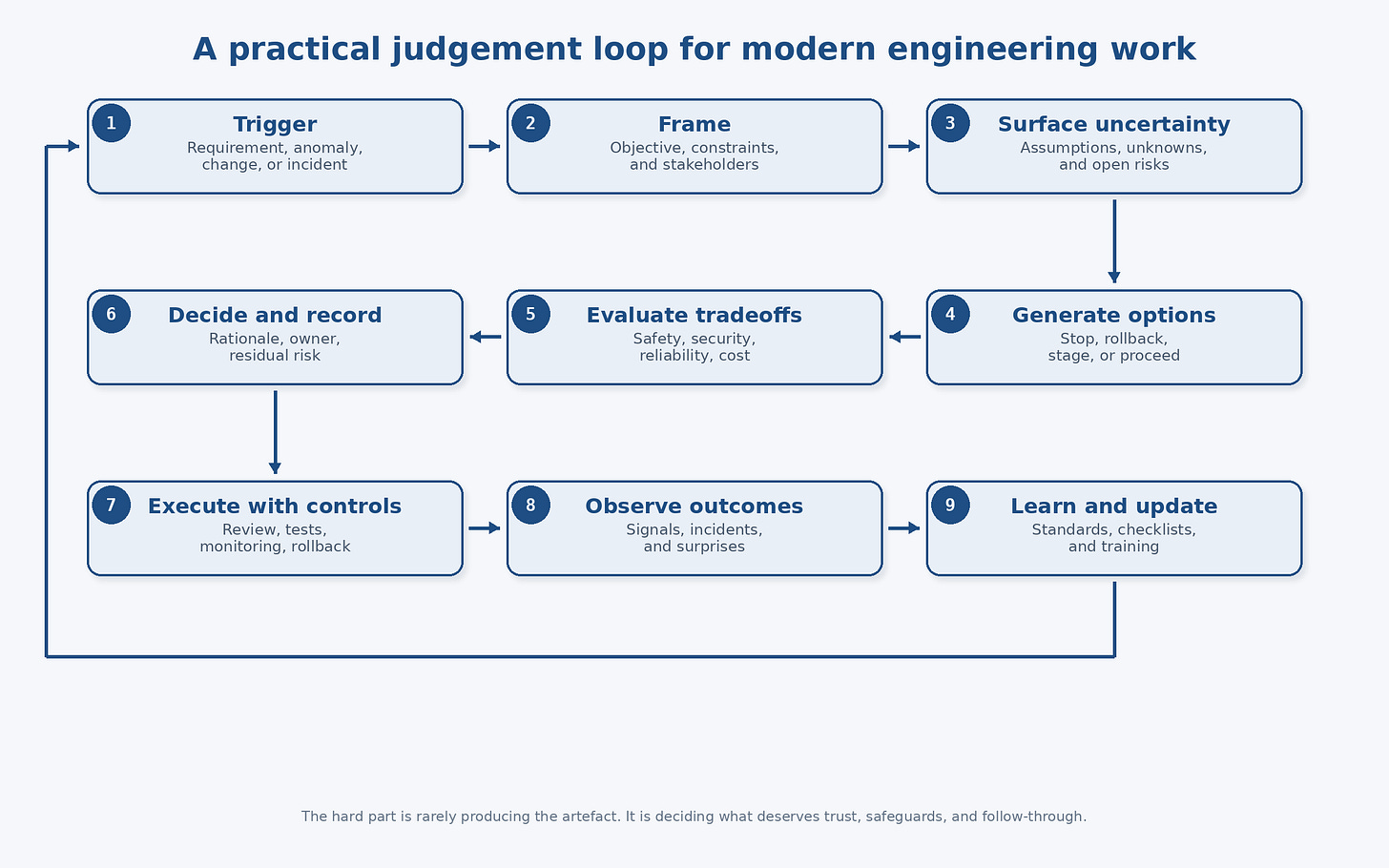

By judgment, I do not mean vague seniority theatre. I mean the habit of making defensible decisions under uncertainty. It is the ability to frame the problem properly, surface the real constraints, spot the second-order effect, choose the least bad tradeoff, and know when a fast answer is actually dangerous.

That has always mattered. The difference now is relative value. When implementation gets easier, decision quality becomes more visible.

This is not just a nice philosophical take. The profession has been signalling this for years. The National Society of Professional Engineers still puts the safety, health, and welfare of the public at the centre of engineering responsibility, including the duty to notify the employer, client, or other appropriate authority when an engineer’s judgment is overruled under circumstances that endanger life or property.[1] ABET’s current engineering criteria explicitly require graduates to make informed judgments and to use engineering judgment when drawing conclusions from experiments and analysis.[2]

So no, judgment is not some fluffy add-on that appears after the real work is done. It is part of the job description.

What has changed is that more of the market can now fake the implementation part for longer.

Why this matters more now

The easiest mistake right now is to confuse output with value.

A tool can produce a plausible pull request in minutes. That does not mean the problem was well framed. It does not mean the change is safe to deploy. It does not mean the edge cases were considered. It definitely does not mean the long-term maintenance cost got any lower.

This sounds obvious, but teams miss it all the time. They celebrate faster artifact creation while quietly offloading more risk onto review, QA, operations, security, and future maintainers.

The Stack Overflow 2025 Developer Survey captured the mood better than most think-pieces did: more developers actively distrust the accuracy of AI tools than trust it, with 46% saying they distrust the output and 33% saying they trust it.[3] That gap matters. It tells you the industry is not struggling with access to generated code. It is struggling with whether generated code deserves confidence.

That is why the bottleneck has moved upstream.

If a junior engineer can generate something that looks competent, then the premium shifts to the person who can answer harder questions. Is this the right abstraction? What breaks under retry? What happens when the input order changes? Which failure is acceptable, and which one gets us paged at 2 a.m.? Are we about to ship a local optimization that creates a system-level mess?

Preference is not performance. A workflow that feels fast in the first ten minutes can be slow over two quarters if it produces brittle decisions.

Table 1. The skill shift inside modern engineering work.

What good judgment actually looks like

Good judgment is usually less glamorous than people want it to be.

It often looks like refusing to be impressed too early.

It looks like asking one more annoying question before approving a design. It looks like noticing that a feature request is really a policy question in disguise. It looks like treating AI output as a draft with a burden of proof, not as a completed answer. It looks like knowing when to stop exploring options and when to reopen the decision because the context changed.

I think good judgment in engineering usually has five parts.

First, problem framing. The team that solves the wrong problem elegantly is still wrong. Strong engineers clarify the real outcome, the real constraints, and the real user harm before they touch the solution.

Second, tradeoff quality. Most meaningful engineering choices are not about perfect versus bad. They are about expensive versus reversible, fast versus auditable, elegant versus operable, and clever versus teachable.

Third, risk calibration. Not every decision deserves ceremony. Not every decision deserves speed either. Good engineers separate reversible from irreversible moves. They know when a quick experiment is fine and when a small mistake can turn into a permanent tax.

Fourth, system awareness. A function can be locally clean and globally stupid. A change that looks harmless inside one service can create retry storms, double sends, broken reports, or compliance gaps downstream.

Fifth, learning discipline. Good judgment is not just the decision itself. It is the quality of the feedback loop after the decision lands.

That last part matters more than teams admit. NIST’s Secure Software Development Framework exists precisely because ordinary software development life cycle models often do not address security in enough detail, which means teams need explicit secure-development practices layered into the work.[4] NIST’s AI Risk Management Framework makes a similar point from the AI side: organizations need structured ways to manage AI risk and make trustworthiness operational, not rhetorical.[5]

In other words, good judgment is not a personality trait. It is a working system.

Figure 1. A practical judgment loop for modern engineering work.

A concrete workflow: the AI-written migration that looks fine until it isn’t

Here is an example that is more realistic than most benchmark chatter.

Imagine a team needs to change the schema behind a notification system. An engineer asks an AI tool to draft the migration, the backfill logic, the ORM model changes, and a batch job to clean old records. The tool produces something that compiles. Unit tests pass. The diff looks tidy. Everybody feels efficient.

The weak version of engineering stops there.

The stronger version gets irritating in exactly the right way.

Someone asks whether the migration is idempotent. Someone else checks whether the backfill will fight live writes. Another engineer asks what happens if the job is retried halfway through. A product-minded reviewer asks whether message history could become inconsistent for end users during the transition. Ops asks about rollout order, observability, and rollback. Security asks whether the temporary data shape changes access boundaries or audit requirements. A good staff engineer asks the blunt question: do we even need a backfill, or are we trying to preserve a convenience that is not worth the operational risk?

None of that is syntax work.

None of that is model benchmark work.

That is judgment.
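Two of those review questions, idempotency and retry behaviour, can at least be made concrete. What follows is a minimal sketch, not a real team's migration: the `notifications` table, the legacy `channel` column, the new `channel_v2` column, and the lowercase normalization are all hypothetical names chosen for illustration, and SQLite stands in for whatever database is actually in use.

```python
import sqlite3


def backfill_channel_v2(conn: sqlite3.Connection, batch_size: int = 2) -> int:
    """Copy the legacy `channel` value into `channel_v2` in small batches.

    Returns the number of rows updated during this run.
    Assumes `channel` is NOT NULL, so every selected row can be filled.
    """
    total = 0
    while True:
        # Select only rows that still lack the new value. A re-run after a
        # crash therefore skips finished work (idempotent), and rows that a
        # live write has already populated are never selected.
        ids = [row[0] for row in conn.execute(
            "SELECT id FROM notifications WHERE channel_v2 IS NULL "
            "ORDER BY id LIMIT ?", (batch_size,))]
        if not ids:
            return total
        # One transaction per batch: committed on success, rolled back on
        # error, so a retry halfway through loses at most one batch.
        with conn:
            for row_id in ids:
                cur = conn.execute(
                    "UPDATE notifications SET channel_v2 = lower(channel) "
                    # Re-check the guard in case a live write landed after the SELECT.
                    "WHERE id = ? AND channel_v2 IS NULL", (row_id,))
                total += cur.rowcount


def demo():
    """Run the backfill twice against an in-memory table with made-up rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE notifications ("
        "id INTEGER PRIMARY KEY, channel TEXT NOT NULL, channel_v2 TEXT)")
    conn.executemany(
        "INSERT INTO notifications (id, channel, channel_v2) VALUES (?, ?, ?)",
        [(1, "EMAIL", None), (2, "SMS", None),
         (3, "Push", "push-live"),  # simulates a live write in the new shape
         (4, "EMAIL", None), (5, "SMS", None)])
    conn.commit()
    first = backfill_channel_v2(conn)
    second = backfill_channel_v2(conn)  # the retry
    rows = conn.execute(
        "SELECT id, channel_v2 FROM notifications ORDER BY id").fetchall()
    return first, second, rows


if __name__ == "__main__":
    print(demo())
```

In this sketch the first run updates four rows, the retry updates none, and the row that a live write had already populated is left alone. Whether the backfill deserves to exist at all is still the judgment call; the code only shows what a defensible answer to two of the questions might look like.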

The interesting part is that AI tools can make this gap wider, not smaller. They reduce the time between idea and artifact, which is useful. They also make it easier to skip the thinking that should have happened before the artifact existed. The team now has something concrete to react to, so everyone feels like work has progressed. Sometimes it has. Sometimes the team has just become more efficiently wrong.

That is why acceptance criteria are the new code review.

I would rather have an average engineer with sharp acceptance criteria, a rollback plan, explicit assumptions, and a clean monitoring strategy than a brilliant engineer who can generate code quickly and explain afterwards why the blast radius was unforeseeable.

How teams actually train judgment

Teams say they want judgment, then they build environments that reward speed theatre.

If you want better judgment, you need to train it in the open.

The first method is decision visibility. Important decisions should leave a trail: what problem was being solved, which options were considered, why the chosen path won, what assumptions were made, and what signals would tell you the choice was failing. This does not need to become bureaucratic sludge. A short decision record is often enough. The point is to make reasoning inspectable.
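As a rough illustration of how small such a record can be, here is a sketch that turns the trail described above into a data structure. The class name, field names, and the `missing_fields` helper are my own assumptions for the example, not an established template, and the sample record is invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionRecord:
    """A short, inspectable trail for one important decision (hypothetical shape)."""
    problem: str                          # what problem was being solved
    options_considered: tuple[str, ...]   # which options were on the table
    chosen: str                           # the path that won
    rationale: str                        # why it won
    assumptions: tuple[str, ...]          # what was taken for granted
    failure_signals: tuple[str, ...]      # what would show the choice is failing

    def missing_fields(self) -> list[str]:
        """Names of fields left empty, so a reviewer sees which reasoning is absent."""
        return [name for name, value in vars(self).items() if not value]


# Invented example: the author has not yet said what failure would look like.
record = DecisionRecord(
    problem="Historical notification rows do not match the new schema",
    options_considered=("backfill all rows", "dual-read both shapes", "skip backfill"),
    chosen="dual-read both shapes",
    rationale="Avoids a long-running job competing with live writes",
    assumptions=("reads of old rows are rare",),
    failure_signals=(),
)

if __name__ == "__main__":
    print(record.missing_fields())
```

Here `record.missing_fields()` returns `["failure_signals"]`, which is exactly the gap a reviewer should push on before the decision ships.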

The second method is postmortems that are genuinely useful. Not blame rituals. Not timeline fan fiction. Real review of the framing, the assumptions, the missed signals, and the controls that were absent or weak. If the same class of mistake keeps happening, the issue is usually not individual intelligence. It is missing scaffolding.

The third method is scenario work. Put engineers in realistic tradeoff situations. Ask whether they would ship, delay, isolate, roll back, or redesign. Ask what extra evidence they would require. You learn more from that than from asking whether they remember a specific API call.

The fourth method is exposure to consequences. Judgment improves when engineers feel the operational cost of their choices. If one group writes code and another group absorbs every outage, you are training local optimization, not engineering maturity.

The fifth method is managerial honesty. If promotions are still mostly driven by visible output volume, then the organization is telling people what it actually values. You do not get a judgment culture by saying the word judgment a lot.

“Ship fast, observe everything, revert faster” is still a good rule. But notice what sits inside it: choosing what to ship, what to watch, and what would justify a reversal. Again, the hard part is not typing.
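One way to force those choices into the open is to write the revert conditions down before the rollout starts. The sketch below is an assumption-laden illustration: the `RevertTrigger` type, the signal names, and the thresholds are all hypothetical, and treating an unobserved signal as a breach is a design choice I am making for the example, not a standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RevertTrigger:
    signal: str       # what to watch
    threshold: float  # a value above this justifies a reversal


def breached(triggers: list[RevertTrigger], observed: dict[str, float]) -> list[str]:
    """Return the signals that justify a revert, in the order they were declared.

    A signal missing from `observed` counts as breached: if the team cannot
    see the thing it promised to watch, that is itself a reason to stop.
    """
    result = []
    for trigger in triggers:
        value = observed.get(trigger.signal)
        if value is None or value > trigger.threshold:
            result.append(trigger.signal)
    return result


# Hypothetical rollout plan and a hypothetical snapshot of metrics.
plan = [
    RevertTrigger("error_rate", 0.02),
    RevertTrigger("p99_latency_ms", 800.0),
    RevertTrigger("duplicate_sends", 0.0),
]
snapshot = {"error_rate": 0.01, "p99_latency_ms": 950.0}

if __name__ == "__main__":
    print(breached(plan, snapshot))
```

With this snapshot the function returns `["p99_latency_ms", "duplicate_sends"]`: latency is over its threshold, and duplicate sends were never measured. The code is trivial on purpose. Picking the signals and the thresholds is the part that takes judgment.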

What I would hire and reward for

A lot of interview loops still overweight the part of engineering that is easiest to observe in a compressed setting.

You can see whether someone can produce an answer quickly. You can see whether they know a framework. You can see whether they can speak fluently about architecture patterns. What is harder to see is whether they make cleaner decisions when the information is incomplete and the tradeoffs are ugly.

That is why many teams accidentally hire for confidence and call it judgment.

If I cared about judgment, I would ask candidates to walk through a messy decision. A real one. A rollout that went wrong. A migration they delayed. A time they chose not to build something. I would listen for whether they can name the assumptions they were carrying, the signals they trusted too much, and the point at which they realized the original framing was off.

I would also pay attention to how they talk about constraints. Weak engineers often treat constraints as annoying blockers. Strong engineers use them as design inputs. That difference matters because real work is constraint handling with occasional bursts of typing.

Inside teams, I would reward engineers who make the system easier to reason about. People who reduce ambiguity. People who write decision records that save other people time. People who improve rollback quality, testability, observability, and handoff clarity. Those things do not always look heroic in the moment. They compound anyway.

The teams that win in an AI-heavy environment will not be the ones that generate the most code. They will be the ones that waste the least energy on plausible nonsense.

What I would optimize for now

If I were advising an engineer early in their career, I would still tell them to build real technical depth. You cannot exercise judgment in a domain you do not understand.

But I would also tell them not to confuse technical depth with career insulation.

The engineers who become hard to replace are usually the ones who reduce expensive uncertainty. They turn vague requests into workable plans. They spot the hidden dependency before it becomes an outage. They know when a tool output is good enough, when it needs rewriting, and when the problem should be pushed back on entirely.

They make other people safer.

That is why I think the premium skill has shifted.

Not away from engineering.

Not away from code.

Not into empty “leadership” talk.

It has shifted toward better decisions around the code.

The useful question is not whether AI can write more of the implementation. It clearly can. The useful question is whether your team is getting better at deciding what deserves to exist, what deserves trust, and what deserves a hard no.

Because once code gets cheap, bad judgment gets very expensive.

Notes / references

[1] National Society of Professional Engineers, “NSPE Code of Ethics for Engineers.” https://www.nspe.org/career-growth/nspe-code-ethics-engineers

[2] ABET, “Criteria for Accrediting Engineering Programs, 2025–2026,” Criterion 3 student outcomes. https://www.abet.org/accreditation/accreditation-criteria/criteria-for-accrediting-engineering-programs-2025-2026/

[3] Stack Overflow, “2025 Developer Survey – AI.” https://survey.stackoverflow.co/2025/ai

[4] NIST, “SP 800-218: Secure Software Development Framework (SSDF) Version 1.1.” https://csrc.nist.gov/pubs/sp/800/218/final

[5] NIST, “Artificial Intelligence Risk Management Framework (AI RMF 1.0).” https://www.nist.gov/publications/artificial-intelligence-risk-management-framework-ai-rmf-10